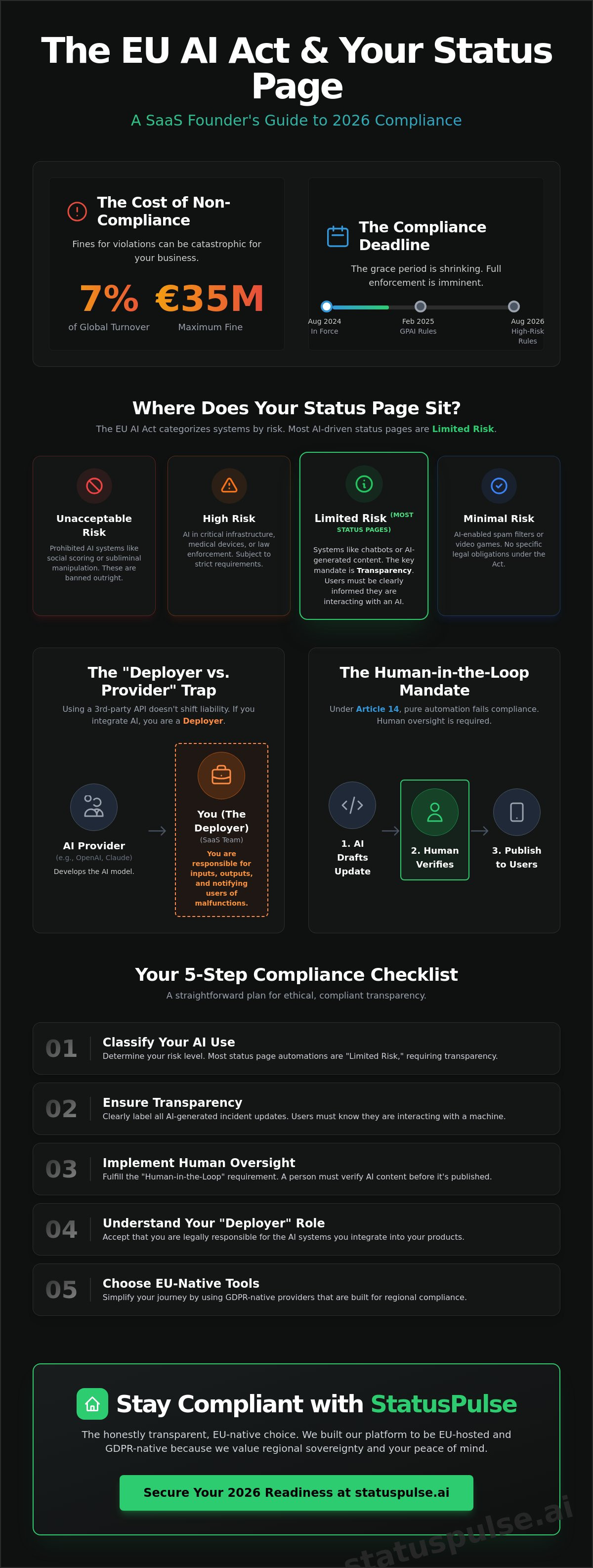

From August 2026, a single automated incident update that breaks the EU AI Act's transparency rules could expose your company to fines of up to €15 million or 3% of global annual turnover. The EU AI Act is no longer a distant theoretical framework. It is a looming reality for every SaaS team using Claude or OpenAI models to draft their system alerts. You likely feel the weight of compliance fatigue. Handling another set of complex regulations while trying to scale feels like a tax on innovation. We agree that bureaucracy should not get in the way of honest communication with your users. This article explains what SaaS founders need to know about status pages and the EU AI Act to keep their workflows legal and their transparency intact.

You will learn how to audit your current incident response process and whether your third-party integrations create hidden liabilities. We provide a clear compliance checklist and a look at how regional, EU-hosted tools simplify your path to 2026 readiness. It's time to replace uncertainty with a straightforward plan for ethical, compliant transparency. No surprises. Just clear steps to protect your business and your customers.

Key Takeaways

- Prepare for 2026. Learn how the world’s first comprehensive AI framework targets any system affecting EU residents, including your incident stack.

- Classify your risk level. Understand why most status page automations fall under "Limited Risk" and the specific transparency obligations you must meet.

- Stay out of legal trouble. Learn how to keep your automated updates from creating legal liability.

- Implement human-in-the-loop oversight. Discover why Article 14's human-oversight principle means a person should verify AI-generated content before it goes live to your customers.

- Build on EU-native soil. See how choosing a GDPR-native status page provider simplifies your compliance journey through built-in transparency.

Decoding the EU AI Act for SaaS Founders and DevOps

The EU's Artificial Intelligence Act is no longer a distant policy debate. It officially entered into force on August 1, 2024. For SaaS founders, the grace period is shrinking. From August 2026, most of its obligations apply to AI systems whose output is used in the EU, wherever the company behind them is based. This isn't just about training models; it is about how you use them. For status pages, the starting point is recognizing that transparency is now a legal requirement. Status pages have evolved from simple uptime trackers into essential tools for the regulatory supply chain.

The Act categorizes AI by risk. Most SaaS applications fall into the "minimal risk" or "limited risk" buckets, but some land in "high risk." If your software uses AI to filter resumes, score credit, or manage critical infrastructure, the requirements are strict. You must provide clear information to users. At a minimum, they need to know when they are interacting with an AI. And when that AI fails, an honest status page is how you tell them. Honesty is no longer optional. It is a line item in your compliance audit.

Why 2026 is the Compliance "Panic Window"

The timeline is aggressive. Bans on prohibited practices have applied since February 2, 2025, and obligations for general-purpose AI models followed on August 2, 2025. On August 2, 2026, the Article 50 transparency obligations and the requirements for most high-risk systems take effect. The financial stakes are massive. Fines are tiered: prohibited practices can cost up to €35 million or 7% of total worldwide annual turnover for the preceding financial year, whichever is higher, while breaches of most other obligations, including transparency, can cost up to €15 million or 3%. Claiming you did not understand the technical nuances of your third-party API is unlikely to be a valid legal defense. Compliance must be "native" to your workflow, not an afterthought. You need a reliable way to communicate system health to avoid the appearance of negligence.
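The fine arithmetic is easy to misread, so here is a minimal sketch of the ceilings in Article 99. The tier names and the `fine_ceiling_eur` function are our own illustration, and the result is a statutory maximum, not a prediction of what a regulator would actually impose. Each tier pairs a fixed amount with a share of total worldwide annual turnover for the preceding financial year; most companies face whichever is higher, while SMEs and start-ups face whichever is lower.

```python
# Statutory fine ceilings under Article 99 of the EU AI Act.
# Tier names are our own labels; values are (fixed EUR cap, percent of
# total worldwide annual turnover for the preceding financial year).
TIERS = {
    "prohibited_practice": (35_000_000, 7),    # Article 99(3)
    "other_obligation": (15_000_000, 3),       # Article 99(4), includes Article 50 transparency
    "incorrect_information": (7_500_000, 1),   # Article 99(5)
}


def fine_ceiling_eur(tier: str, turnover_eur: int, is_sme: bool = False) -> float:
    """Return the maximum possible fine for a tier, not a predicted fine.

    Most companies face whichever figure is higher; SMEs and start-ups
    face whichever is lower (Article 99(6)).
    """
    fixed_cap, percent = TIERS[tier]
    turnover_cap = turnover_eur * percent / 100
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)


# A company with EUR 1 billion turnover breaching a transparency obligation:
print(fine_ceiling_eur("other_obligation", 1_000_000_000))
# An SME with EUR 10 million turnover, same breach:
print(fine_ceiling_eur("other_obligation", 10_000_000, is_sme=True))
```

The "higher of" rule is why the percentage matters most for large companies, while the "lower of" rule caps exposure for small teams.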

The "Deployer" vs. "Provider" Trap

Many founders believe that using OpenAI or Anthropic shifts the liability to the AI giants. This is a dangerous mistake. In the eyes of the EU, if you integrate an AI API into your product, you are likely a "deployer." You are responsible for how you use it: the inputs you feed it, the outputs you show your users, and how you respond when it fails. You cannot outsource your ethical or legal obligations to a vendor in San Francisco. Article 3(4) of the Act defines a deployer as a natural or legal person, public authority, agency, or other body using an AI system under its authority, except where the system is used in the course of a personal, non-professional activity.

- Provider: The entity that develops the AI (e.g., OpenAI).

- Deployer: The SaaS team that integrates the AI to solve a customer problem.

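- Responsibility: For high-risk systems, Article 26 requires deployers to monitor the system's operation and report risks and serious incidents to the provider and the relevant authorities. Notifying users of malfunctions is good practice at every risk level.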

This is where StatusPulse fits into your 2026 strategy. When your AI integration fails, your status page tells the story. It provides a documented, timestamped record of what you told users and when. We built our platform to be EU-hosted and GDPR-native because we value regional sovereignty. In the new regulatory landscape, a transparent status page is one of your best defenses against the "I didn't know" trap. You stay in control. You press send. Your users stay informed.

Risk Categories: Where Does Your Status Page Sit?

The EU AI Act doesn't treat every algorithm the same. Instead, it uses a tiered system to categorize AI based on potential harm. For most developers, this is good news. The Act identifies four distinct risk levels: Unacceptable, High, Limited, and Minimal. Your compliance burden depends on which bucket your status page falls into.

Prohibited AI systems are banned entirely. These include tools using manipulative subliminal techniques or biometric categorization that infers sensitive traits such as race or political opinions. You won't find these in a standard status page setup. Most automated incident reporting falls into the Limited Risk category. However, if your AI acts as a safety component in managing critical infrastructure such as electricity or water supply, or sits inside a regulated product such as a medical device, you may face High Risk classifications. Understanding these boundaries is essential before the 2026 enforcement deadlines.

Limited Risk and the Transparency Mandate

Most AI-driven status pages live here. If you use a model to draft incident updates, you're most likely running a Limited Risk system. The core requirement is transparency: users should know when they're interacting with a machine. If a bot writes your downtime summary, label it clearly. No hidden automations. No faking the human touch during a crisis.

Labeling AI-generated text isn't just about avoiding a fine. It's about integrity. StatusPulse believes in a human-in-the-loop approach. Claude drafts; you press send. This ensures your public page remains honest. Under Article 50, providers of generative AI must make synthetic content detectable, and deployers carry their own disclosure duties. Whether through a small badge or a text disclaimer, your users deserve to know the source of their information. This builds trust when things go wrong.

High-Risk Scenarios for Enterprise SaaS

The rules change for the smaller group of SaaS companies whose AI serves critical sectors. If your AI helps manage essential services like water or electricity supply, it may be classified as high-risk, and the status updates that describe it deserve the same scrutiny. The Act requires high-risk systems to have a documented risk management system. You can't just set it and forget it. You need technical documentation that shows how you stop your AI from hallucinating a "system up" message during a total blackout.

Human oversight is mandatory here. Automated failover notifications must be verifiable by a real person. Deployers must keep the logs the system generates automatically, to the extent those logs are under their control, for at least six months (Article 26), and providers must meet accuracy, robustness, and cybersecurity requirements (Article 15). This isn't just corporate bloat; it's a safety net for society. If your enterprise tool fits this description, start your documentation process now. At StatusPulse, we help you keep things simple and compliant without the enterprise price tag.

The Human-in-the-Loop: Why Pure Automation Fails Compliance

Article 14 of the regulation isn't a suggestion. It mandates meaningful human oversight for high-risk AI systems, and its logic is the right benchmark for any AI that speaks to your customers. For SaaS founders, this applies directly to how you communicate during a crisis. Relying on a "black box" to manage your public-facing status page is exactly the kind of gamble the EU AI Act is designed to discourage. Transparency isn't just a marketing buzzword anymore; it's a documented legal requirement that demands a human at the wheel.

When an incident occurs, the pressure to update users is high. Pure automation feels like the solution, but it creates a significant liability trap. If your AI decides a database lock is actually "scheduled maintenance," you aren't just being vague. You're misleading your users, and that undermines the transparency the Act demands. Compliance here is fundamentally about keeping control of the narrative to ensure accuracy and accountability. You can't delegate your legal responsibility to a script.

The Risk of AI Hallucinations in Incident Reports

Automated summaries often struggle with technical nuances. An AI might scan your server logs and read a 500ms latency spike as a minor blip when it's actually the start of a cascading failure. If your status page falsely claims a service is "operational" because an unverified AI summary missed the context, the legal costs can be steep. Misleading your customers during an outage isn't just bad for churn. It cuts against the transparency obligations that apply from 2026. Relying on unverified AI status updates risks turning a technical glitch into a legal nightmare for your compliance officer.
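One cheap safeguard is to cross-check an AI draft against your own monitoring before a human even reviews it. The sketch below assumes you already have per-component health checks and that the draft maps each component to a claimed state; `CheckResult`, `contradictions`, and the state names are hypothetical. It flags any component the draft calls "operational" or "resolved" while its checks are failing. It does not replace human review; it only catches the most dangerous class of hallucination early.

```python
from dataclasses import dataclass


# Hypothetical type: adapt it to whatever your monitoring stack exposes.
@dataclass
class CheckResult:
    component: str   # e.g. "api", "database"
    healthy: bool    # latest probe result from your own monitoring


# Draft states that tell users "nothing to worry about".
REASSURING_STATES = {"operational", "resolved"}


def contradictions(draft_states: dict[str, str], checks: list[CheckResult]) -> list[str]:
    """List components the AI draft calls healthy while monitoring says otherwise."""
    failing = {c.component for c in checks if not c.healthy}
    return sorted(
        component
        for component, state in draft_states.items()
        if state.strip().lower() in REASSURING_STATES and component in failing
    )


draft = {"api": "Operational", "database": "major_outage"}
checks = [CheckResult("api", healthy=False), CheckResult("database", healthy=False)]
problems = contradictions(draft, checks)
if problems:
    # Block the draft and route it back to the on-call engineer.
    print(f"Draft rejected, contradicts monitoring for: {problems}")
```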

Drafting vs. Publishing: A Compliant Workflow

We believe in a simple model: Claude drafts, you press send. This approach keeps human agency at the heart of every communication. It keeps you compliant while saving time. By using AI to generate the initial report from technical data, you remove the "blank page" problem during a stressful outage. However, the final "Publish" click must belong to a human who has verified the facts. This workflow honors the spirit of the law and builds trust. For more on why honesty beats total automation, see Why Transparent Incident Communication Prevents Customer Churn.

- Human oversight must be active and informed, not a passive checkbox exercise.

- AI should assist the technical expert, never replace their final judgment.

- Documented workflows that include human review help prove compliance during audits.

- Compliance requires a shift from "set and forget" to "verify then publish."
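The "verify then publish" rule can be enforced in code rather than left to habit. Here is a minimal sketch of an update lifecycle in which publishing is impossible without a named human approval. The class, state, and method names are hypothetical, and in a real system the reviewer identity would come from your authentication layer rather than a free-text string.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum
from typing import Optional


class State(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    PUBLISHED = "published"


@dataclass
class IncidentUpdate:
    body: str                          # starts as the AI-generated draft
    state: State = State.DRAFT
    reviewer: Optional[str] = None     # the human who takes responsibility
    published_at: Optional[datetime] = None

    def approve(self, reviewer: str, edited_body: Optional[str] = None) -> None:
        """A named person reviews the draft, optionally edits it, and signs off."""
        if self.state is not State.DRAFT:
            raise ValueError("only drafts can be approved")
        if not reviewer.strip():
            raise ValueError("approval requires a named human reviewer")
        if edited_body is not None:
            self.body = edited_body
        self.reviewer = reviewer
        self.state = State.APPROVED

    def publish(self) -> None:
        """Refuse to publish anything a human has not approved."""
        if self.state is not State.APPROVED:
            raise PermissionError("only human-approved updates can be published")
        self.state = State.PUBLISHED
        self.published_at = datetime.now(timezone.utc)


update = IncidentUpdate(body="Elevated API error rates. Investigating.")
update.approve("on-call engineer",
               edited_body="Elevated API error rates in EU-West. Investigating.")
update.publish()
```

Making the unapproved path raise an error, rather than log a warning, is what turns human oversight from a checkbox into an actual gate.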

5 Steps to EU AI Act Compliance for Status Pages

Compliance isn't about bureaucracy. It's about building a foundation of trust. For status pages, the focus must shift from automated speed to verified accuracy. On August 2, 2026, the Article 50 transparency obligations begin to apply. You need a plan that works now. Don't wait for a regulator to knock. Build these habits into your workflow today.

- Audit every AI touchpoint: Map every instance where a model interacts with your incident data. Do you use an LLM to summarize error logs? Is a bot categorizing the severity of your API latency? Document these processes. Since February 2025, Article 4 has required deployers to ensure their staff have sufficient AI literacy, and that starts with knowing where AI sits in your stack.

- Implement clear labeling: Transparency is the core of the new law. If a machine helped write an update, your users have a right to know. This isn't a badge of shame. It's a mark of honesty.

- Establish a human-in-the-loop protocol: Automation should assist, not replace. Every public update needs human eyes before it goes live. Use the "Claude drafts, you press send" model to stay safe.

- Maintain versioned logs: Store the original AI-generated draft alongside the final human-edited version. This creates a clear audit trail. If a summary ever misleads a customer, you'll need this data to prove your oversight process.

- Verify data sovereignty: Ensure your monitoring provider hosts data within the EU. Many US-based incumbents route your telemetry through Virginia or California. Transfers outside the EU add GDPR safeguards and legal friction on top of your AI Act obligations. Choose a provider that keeps your data on European soil.
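Steps one, three, and five lend themselves to a simple inventory you can re-run before each audit. The sketch below is one way to record AI touchpoints and flag the ones that lack human review, lack a user-facing label, or process data outside the EU. The `AITouchpoint` fields and example entries are hypothetical, and a flag means "look closer," not "unlawful": transfers outside the EU can be lawful with the right safeguards.

```python
from dataclasses import dataclass

# EU-27 member states as ISO 3166-1 alpha-2 codes.
# (ISO uses "GR" for Greece; EU institutions often write "EL".)
EU_COUNTRIES = {
    "AT", "BE", "BG", "HR", "CY", "CZ", "DK", "EE", "FI", "FR", "DE", "GR", "HU", "IE",
    "IT", "LV", "LT", "LU", "MT", "NL", "PL", "PT", "RO", "SK", "SI", "ES", "SE",
}


@dataclass
class AITouchpoint:
    name: str            # e.g. "log summarizer", "severity classifier"
    vendor: str          # who provides the underlying model
    data_country: str    # where incident data is processed (ISO code)
    human_review: bool   # does a person approve output before users see it?
    labeled: bool        # is AI involvement disclosed to users?


def audit(touchpoints: list[AITouchpoint]) -> dict[str, list[str]]:
    """Return open issues per touchpoint; an empty dict means nothing was flagged."""
    findings: dict[str, list[str]] = {}
    for tp in touchpoints:
        issues = []
        if tp.data_country.upper() not in EU_COUNTRIES:
            issues.append(f"data processed outside the EU ({tp.data_country})")
        if not tp.human_review:
            issues.append("no human review before publication")
        if not tp.labeled:
            issues.append("AI involvement not disclosed to users")
        if issues:
            findings[tp.name] = issues
    return findings


inventory = [
    AITouchpoint("incident drafter", "example-llm-vendor", "DE", human_review=True, labeled=True),
    AITouchpoint("severity classifier", "in-house", "US", human_review=False, labeled=False),
]
for name, issues in audit(inventory).items():
    print(name, "->", "; ".join(issues))
```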

Labeling AI-Generated Incident Updates

Article 50 requires disclosures to be clear and distinguishable, and to reach users no later than their first interaction with, or exposure to, the AI content. A simple footer tag like "AI-assisted draft verified by human operators" works well. For API-driven updates in your public status page UI, include a specific metadata field. This prevents confusion and supports compliance with Article 50 (numbered Article 52 in earlier drafts of the Act). It's about being honest with your users. No surprises. Just clear communication that respects their intelligence.
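For API-driven updates, the label can travel as explicit metadata so every client renders it consistently. This is a minimal sketch assuming a JSON status page API; the field names (`ai_assisted`, `disclosure`) are hypothetical, not a published schema. The functions assume only human-approved updates reach them, which is why the label can say "verified by human operators."

```python
import json
from datetime import datetime, timezone

# The footer text suggested above; field names below are hypothetical.
AI_DISCLOSURE = "AI-assisted draft verified by human operators"


def public_update_payload(body: str, ai_assisted: bool, published_at: datetime) -> str:
    """Serialize an already human-approved update with explicit AI metadata."""
    return json.dumps({
        "body": body,
        "published_at": published_at.isoformat(),
        "ai_assisted": ai_assisted,
        # Human-readable label for UIs that render the metadata directly.
        "disclosure": AI_DISCLOSURE if ai_assisted else None,
    })


def render_update(body: str, ai_assisted: bool) -> str:
    """Plain-text rendering with the label directly under the update."""
    return f"{body}\n({AI_DISCLOSURE})" if ai_assisted else body


print(public_update_payload("Database failover complete. Monitoring.", True,
                            datetime(2026, 8, 3, 9, 30, tzinfo=timezone.utc)))
print(render_update("Database failover complete. Monitoring.", True))
```

Keeping the boolean and the human-readable label as separate fields lets a UI show a compact badge while API consumers can filter on `ai_assisted` directly.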

Documentation and Logging Requirements

In 2026, version control applies to your words, not just your code. Keep records of where and how AI models shaped each public update. This helps if a regulator ever questions an incident response. For more on building resilient systems, check out API Monitoring: The Developer’s Guide. Detailed logging proves you’ve prioritized human oversight from day one. It turns compliance into a quality standard.
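To keep the original AI draft next to the human-approved text, as step four of the checklist suggests, you can store both with a diff in an append-only log. This sketch is a hypothetical design, not a StatusPulse feature: each record carries the previous record's SHA-256 hash, so editing an entry, or removing one from the middle, breaks the chain. The `model` value is whatever identifier your AI vendor returns; "example-model" below is a placeholder.

```python
import difflib
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(record: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


class IncidentAuditLog:
    """Append-only log pairing each AI draft with the human-approved text."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    def append(self, incident_id: str, model: str, ai_draft: str,
               published: str, reviewer: str) -> dict:
        diff = "\n".join(difflib.unified_diff(
            ai_draft.splitlines(), published.splitlines(),
            fromfile="ai_draft", tofile="published", lineterm=""))
        record = {
            "incident_id": incident_id,
            "model": model,                  # which model produced the draft
            "ai_draft": ai_draft,
            "published": published,
            "human_edited": ai_draft != published,
            "diff": diff,
            "reviewer": reviewer,
            "recorded_at": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.records[-1]["hash"] if self.records else GENESIS,
        }
        record["hash"] = _digest(record)     # hash covers every field above
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash and check each link to the previous record."""
        prev = GENESIS
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if record["prev_hash"] != prev or _digest(body) != record["hash"]:
                return False
            prev = record["hash"]
        return True


log = IncidentAuditLog()
log.append("INC-042", "example-model", "API is down.",
           "API error rates are elevated in EU-West.", "on-call engineer")
print(log.verify())  # True
```

A hash chain only proves internal consistency. Anyone who can rewrite the whole log can also recompute the hashes, so for audit-grade evidence, store the latest hash somewhere the log's writers can't modify.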

StatusPulse was built for this. We are EU-hosted and GDPR-native. Our AI features follow a simple rule: Claude drafts, you press send. It's an honest approach to status pages under the EU AI Act. We don't hide behind complex layers. We give you the tools to stay compliant without the corporate bloat.

StatusPulse: The Honestly Transparent, EU-Native Choice

Most status page providers are based in the US. They treat GDPR as a checkbox. We treat it as our foundation. From August 2026, when most of the AI Act applies, where your data lives and how your AI operates will shape your legal standing. StatusPulse is EU-hosted. It's built by a team that understands why regional compliance isn't optional for modern SaaS. We built this for developers who are tired of corporate bloat and complex pricing models.

Choosing a monitoring partner shouldn't increase your legal risk. What SaaS founders need to know is that data residency matters more than ever. Our infrastructure is native to the EU. This means your incident data doesn't cross the Atlantic just to be displayed to your European users. We prioritize integrity over flashiness. No surprises. No hidden fees. Just honestly priced monitoring designed to work when things go wrong.

Built for the EU Regulatory Landscape

Being EU-hosted substantially reduces your compliance burden. When you use StatusPulse, your data doesn't leave the EU, so you skip the international-transfer safeguards that US-hosted tools bolt onto their Data Processing Agreements (DPAs). We help you meet the transparency requirements of Article 50 (Article 52 in earlier drafts) directly. This article requires that users are informed when they are interacting with AI. Our system makes this disclosure simple and clear.

- GDPR-Native: Privacy isn't an afterthought. It's built into our Jamstack architecture.

- Article 50 Ready: Clear labeling for AI-assisted content helps you stay on the right side of the law.

- Honestly Priced: We believe in fair value. Our plans are straightforward because we value your time and your budget.

Claude Drafts, You Press Send

The EU AI Act emphasizes human oversight. Blind automation is a liability. Our AI-powered incident management tool is built with this philosophy in mind. When an outage occurs, Claude analyzes your technical logs and system data. It creates a draft. It suggests a clear, professional update for your users. But it never posts on its own.

This "human-in-the-loop" system supports the human-oversight expectations of the 2026 rules. You maintain total control. The AI does the heavy lifting of summarizing technical jargon, while you provide the final verification. It's about speed without letting a hallucination reach your users or put you out of compliance. You get the efficiency of AI with the accountability of a human lead. This is how you keep your status page competitive and compliant under the EU AI Act.

Ready to simplify your compliance? Start building trust with StatusPulse today.

Compliance is Your Competitive Advantage

The regulatory landscape changes fast. From August 2026, the EU AI Act will enforce human oversight for high-risk AI systems and transparency obligations for AI that interacts with people or generates content. SaaS founders can't rely on "set and forget" automation anymore. You need a strategy that prioritizes regional data sovereignty and human-in-the-loop workflows. Understanding how the Act applies to your status page is the first step toward avoiding the legal pitfalls that will soon catch legacy incumbents off guard. Compliance isn't a burden; it's a way to prove you value your users' trust.

StatusPulse makes this transition effortless. We're a small, meticulous team that built an EU-hosted, GDPR-native platform specifically for developers who hate corporate bloat. Our AI doesn't act alone; it drafts your updates, but you stay in control. It's the honest way to manage incidents without the $29 price tags or complex compliance spreadsheets. You get a reliable, transparent window into your system for a fair price. You've built a great product; don't let compliance hurdles slow your growth. Start building that trust today.

Get a compliant, honestly priced status page for €5

Frequently Asked Questions

Does the EU AI Act apply to me if my SaaS is based in the US?

Yes, the EU AI Act applies to US-based SaaS founders if their AI systems are used within the European Union. Article 2 extends the Act to providers and deployers outside the EU whenever the output of their AI system is used in the Union, so geographic location doesn't grant an exemption. If a single customer in Berlin or Paris interacts with your AI-powered status page, you must comply. This extraterritorial reach mirrors GDPR. Non-compliance risks the same hefty fines that European companies face.

Is AI-powered uptime monitoring considered a high-risk system?

Most AI-powered uptime monitoring is not classified as high-risk under Annex III of the Act. High-risk systems typically involve critical infrastructure, employment, credit scoring, law enforcement, or biometric identification. Status pages with AI-drafted updates typically fall under the limited-risk category. This means your primary obligation is transparency. You must inform users when they are interacting with AI. It's about being honest with your users, which is how we've always operated.

What happens if my AI-generated status update is incorrect?

You are legally responsible for any inaccuracies in your AI-generated status updates. Article 14 of the EU AI Act mandates human oversight of high-risk systems to prevent or minimize risks, and the same principle protects you at every risk level. If an AI hallucination erodes user trust or misleads stakeholders, the liability sits with you, not with the model vendor. That's why we use a workflow where Claude drafts and you press send. It keeps a human in the loop.

Do I need to label every single status update if I use AI for drafting?

Yes. Article 50 requires clear disclosure when people interact with AI systems or are exposed to certain AI-generated content, and labeling every AI-assisted update is the simplest way to stay transparent and avoid deceptive practices. A simple label near the timestamp is usually enough. It ensures your users know exactly where the information is coming from without any hidden surprises or corporate fluff.

How does the EU AI Act differ from GDPR for SaaS founders?

GDPR focuses on personal data protection, while the EU AI Act regulates the safety and ethics of AI systems. GDPR has applied since May 2018. The AI Act entered into force in August 2024, and most of its obligations apply from August 2026. While GDPR protects a user's right to privacy, the AI Act protects users from algorithmic bias and opaque decision-making. Both reward a by-design mindset. We built StatusPulse to be GDPR-native from day one.

Can I use free AI tools for incident management under the new Act?

Using free AI tools for incident management is risky because they often lack the necessary data processing agreements. Many free tiers don't offer the processor contract that GDPR Article 28 requires when a vendor handles personal data on your behalf. If a free tool leaks sensitive incident data, you are the one facing the consequences. Professional, paid tools provide these legal safeguards and the technical precision you need, often without the complex, expensive enterprise tiers that incumbents hide them behind.

What are the specific penalties for non-compliance in 2026?

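Penalties for non-compliance are severe and structured in three tiers. Fines for using prohibited AI practices can reach €35 million or 7 percent of total worldwide annual turnover, whichever is higher. Most SaaS founders are more likely to face the second tier: up to €15 million or 3 percent for breaching other obligations, including transparency. Supplying incorrect or misleading information to authorities can cost up to €7.5 million or 1 percent. For SMEs and start-ups, the lower of the two figures applies. The top tier exceeds GDPR's maximum of €20 million or 4 percent. It's a clear signal that the EU takes AI safety very seriously.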

Does StatusPulse store my monitoring data in the EU?

Yes, we store 100 percent of your monitoring data on servers physically located within the European Union. We don't believe in the multi-region shell game that some US incumbents play. By keeping data in the EU, we help keep your GDPR and EU AI Act compliance rock solid. It's about technical precision and plain-spoken ethics. Your data stays where the laws protect it.